A Split Voice Stack Aimed at Real-Time Applications

OpenAI has introduced three real-time voice models through its Realtime API: GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper. The release signals a shift from single, general-purpose voice systems to a split stack tuned for specific workloads. Instead of forcing one model to handle reasoning, translation, and transcription, OpenAI now offers separate lanes so teams can decide where they need depth and where they need speed: GPT-Realtime-2 handles live reasoning, GPT-Realtime-Translate handles live speech translation, and GPT-Realtime-Whisper handles low-latency transcription. The design aims to reduce orchestration complexity for developers building live assistants, call flows, and tool-using voice agents that must keep speaking, handle interruptions, and maintain context. The result is a platform where voice applications can move beyond simple conversational interfaces toward operational voice layers embedded directly in apps and workflows.
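To make the split concrete, here is a minimal Python sketch of how an application might route a session's needs to one or more of the three lanes. The model identifier strings and the `Workload` flags are assumptions for illustration, not confirmed API values; check OpenAI's model list for the exact IDs.

```python
from dataclasses import dataclass

# Hypothetical model IDs; the exact strings the Realtime API expects are an assumption.
REASONING_MODEL = "gpt-realtime-2"
TRANSLATE_MODEL = "gpt-realtime-translate"
TRANSCRIBE_MODEL = "gpt-realtime-whisper"


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of what a live audio session needs."""
    needs_reasoning: bool = False    # tool calls, multi-step answers
    needs_translation: bool = False  # speech in one language, output in another
    needs_transcript: bool = False   # captions, logs, analytics


def pick_lanes(w: Workload) -> list[str]:
    """Return the models a session would use, in a fixed order:
    transcription, then translation, then reasoning."""
    lanes: list[str] = []
    if w.needs_transcript:
        lanes.append(TRANSCRIBE_MODEL)
    if w.needs_translation:
        lanes.append(TRANSLATE_MODEL)
    if w.needs_reasoning:
        lanes.append(REASONING_MODEL)
    return lanes


print(pick_lanes(Workload(needs_transcript=True)))
```

With this kind of routing, a captioning-only feature never touches the reasoning model, which is the "depth versus speed" choice the split stack is meant to expose.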

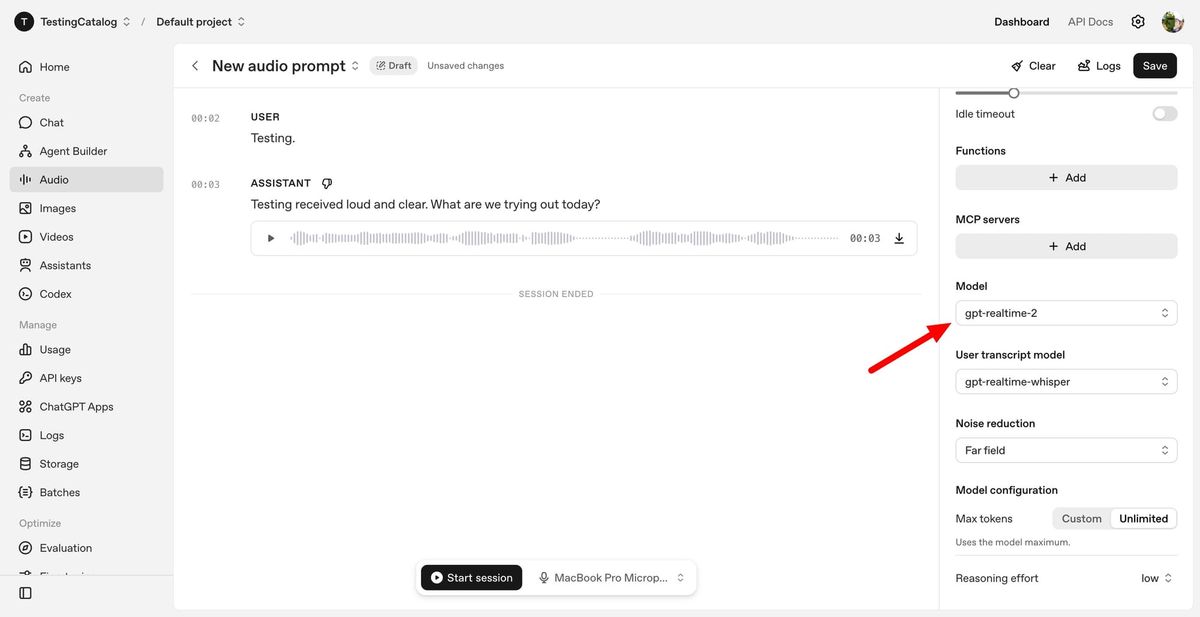

GPT-Realtime-2: GPT-5-Class Reasoning for Live Voice Agents

GPT-Realtime-2 is the centerpiece of OpenAI’s new lineup and is described as delivering GPT-5-class reasoning in spoken conversations. Designed for live voice interactions, it lets applications handle interruptions, corrections, and shifting context while continuing to talk naturally, rather than falling back to rigid call-and-response behavior. The model can speak short preambles such as “let me check that” while it performs actions through parallel tool calls, so the conversation stays continuous instead of going silent. OpenAI has expanded the context window from 32K to 128K tokens, allowing voice agents to track longer, more complex workflows and histories. Developers can tune reasoning effort from minimal to xhigh, trading latency for depth on complex tasks such as multi-step troubleshooting or tool orchestration. According to OpenAI, GPT-Realtime-2 improves on earlier versions in audio intelligence, instruction adherence, context management, and live conversation control, positioning it as the core engine for voice-driven agents.
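The preamble-plus-parallel-tools behavior can be pictured with a small `asyncio` sketch. It does not call the Realtime API: `speak`, `lookup_order`, and `check_refund` are hypothetical stand-ins for audio output and tool backends. What it shows is the ordering. Both tool calls are scheduled before the preamble is spoken, they run concurrently, and their results feed the follow-up utterance.

```python
import asyncio


async def speak(text: str, log: list[str]) -> None:
    """Hypothetical stand-in for streaming audio out; here it only records the text."""
    log.append(f"say: {text}")
    await asyncio.sleep(0)  # yield to the event loop so scheduled tool tasks can run


async def lookup_order(order_id: str) -> str:
    # Hypothetical tool; a real agent would call a backend service here.
    await asyncio.sleep(0.05)
    return f"order {order_id} shipped"


async def check_refund(order_id: str) -> str:
    # Hypothetical second tool that runs concurrently with the first.
    await asyncio.sleep(0.05)
    return f"no refund open for {order_id}"


async def handle_turn(order_id: str) -> list[str]:
    log: list[str] = []
    # Schedule both tool calls first so they run while the preamble is spoken.
    tools = asyncio.gather(lookup_order(order_id), check_refund(order_id))
    await speak("Let me check that for you.", log)
    results = await tools  # results come back in the order the tools were passed
    await speak("; ".join(results), log)
    return log


print(asyncio.run(handle_turn("A17")))
```

Because the two tools run concurrently, the turn waits roughly as long as the slowest tool rather than the sum of both, and the user hears the preamble instead of silence in the meantime.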

GPT-Realtime-Translate: Live Multilingual Conversations at App Speed

GPT-Realtime-Translate targets developers building live multilingual voice products, from customer support lines to education platforms and event tools. The model accepts speech in more than 70 input languages and produces output in 13 languages while keeping pace with the speaker. It is designed to handle regional pronunciation, fast context switches, and domain-specific terminology without losing meaning, so live translation feels less like a delayed relay and more like a shared conversation layer between participants. For businesses, it opens paths to cross-border sales, media localization, and creator tools that adapt content for global audiences. By combining it with GPT-Realtime-2, developers can build voice agents that not only translate but also reason about user requests, retrieve data, and trigger workflows, all while speaking naturally in multiple languages within a single session.
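In a multi-party call, one practical question is how many translation streams each speaker needs. The sketch below assumes one stream per distinct target language rather than one per listener, and it takes the set of supported output languages from the caller because the article does not list the 13 output languages. The function name and the participant mapping are hypothetical; nothing here calls the Realtime API.

```python
def translation_targets(
    speakers: dict[str, str], supported_outputs: set[str]
) -> dict[str, list[str]]:
    """Map each speaker to the distinct languages their speech must be translated into.

    speakers: participant name -> language code they speak and listen in (hypothetical shape).
    supported_outputs: output languages the caller has confirmed the model supports.
    """
    targets: dict[str, list[str]] = {}
    for name, spoken in speakers.items():
        # Listeners who share the speaker's language hear the original audio untranslated.
        needed = {lang for other, lang in speakers.items() if other != name and lang != spoken}
        unsupported = needed - supported_outputs
        if unsupported:
            raise ValueError(f"unsupported output languages: {sorted(unsupported)}")
        targets[name] = sorted(needed)
    return targets


call = {"ana": "es", "ben": "en", "chloe": "en", "dai": "ja"}
print(translation_targets(call, {"en", "es", "ja"}))
```

Here Ben and Chloe both speak English, so Ana's speech needs one English stream, not two. Grouping by language keeps the number of translation streams bounded by the number of languages in the room.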

GPT-Realtime-Whisper: Streaming, Low-Latency Transcription

GPT-Realtime-Whisper brings streaming speech-to-text into the same Realtime API, giving developers a low-latency transcription layer for live environments. The model transcribes audio as people speak, which suits live captions, meeting notes, customer calls, and voice-driven workflows where text must appear while the conversation is still in progress. By treating transcription as a dedicated workload, OpenAI lets teams pair GPT-Realtime-Whisper with GPT-Realtime-2 or GPT-Realtime-Translate, depending on whether they need downstream reasoning or translation. This modular approach lets developers capture high-quality text streams without routing all audio through a heavier reasoning model. As voice becomes an operational interface for apps, GPT-Realtime-Whisper provides the text backbone for logging, analytics, compliance, and integration with existing systems, while still supporting real-time user experiences.
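A streaming transcription lane typically produces partial text first and finalized text later. The sketch below shows one way an app might assemble those pieces: running text goes to live captions immediately, and each finished segment is handed once to downstream consumers such as a logger or a reasoning or translation lane. The `(segment_id, delta, final)` event shape is a simplification assumed for illustration; it is not the Realtime API's actual event schema.

```python
from collections import defaultdict
from typing import Callable


class TranscriptAssembler:
    """Accumulates streaming text deltas per segment and fans out finished segments.

    The event shape here is a hypothetical simplification, not the real API schema.
    """

    def __init__(self) -> None:
        self._partial: dict[str, list[str]] = defaultdict(list)
        self._subscribers: list[Callable[[str, str], None]] = []
        self.finished: list[tuple[str, str]] = []

    def subscribe(self, callback: Callable[[str, str], None]) -> None:
        # e.g. a logger, an analytics sink, or a hand-off to a reasoning/translation lane
        self._subscribers.append(callback)

    def on_delta(self, segment_id: str, delta: str) -> str:
        """Append a partial chunk and return the running text for live captions."""
        self._partial[segment_id].append(delta)
        return "".join(self._partial[segment_id])

    def on_final(self, segment_id: str) -> None:
        """Close a segment: freeze its text and notify each subscriber exactly once."""
        text = "".join(self._partial.pop(segment_id, []))
        self.finished.append((segment_id, text))
        for callback in self._subscribers:
            callback(segment_id, text)


asm = TranscriptAssembler()
asm.subscribe(lambda sid, text: print(f"[{sid}] {text}"))
asm.on_delta("s1", "Hello")
asm.on_delta("s1", ", world")
asm.on_final("s1")
```

Separating partial and final text keeps captions responsive while downstream systems only ever see complete segments, which avoids reprocessing text that is still changing.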

From Simple Chatbots to Voice-First Workflows

Together, the three models push voice AI beyond simple conversational chatbots toward real-time, task-oriented systems. GPT-Realtime-2 is built for “voice-to-action” scenarios, where users speak naturally while the AI handles tasks such as searching, filtering, or scheduling in the background. In “systems-to-voice” and “voice-to-voice” patterns, apps can use the models to narrate system events, coordinate tools, and keep interactions fully spoken. OpenAI positions this stack as infrastructure for live assistants, customer-support flows, and enterprise voice agents that must hold context across long sessions, interruptions, and tool hops. Developers gain fine-grained control: they can apply deep reasoning only where it is needed, keep translation and transcription lightweight, and design voice workflows that feel continuous and reliable. As platforms add more voice interfaces, these models aim to make voice a first-class operational layer rather than a novelty add-on.
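Applying deep reasoning only where it is needed can be expressed as a per-turn policy. The sketch below is a hypothetical heuristic: the `Turn` fields, the scoring, and the intermediate effort names are assumptions. Only the endpoints `minimal` and `xhigh` come from OpenAI's description, and how the chosen level is passed to the API is not shown.

```python
from dataclasses import dataclass

# "minimal" and "xhigh" are the endpoints OpenAI names; the intermediate
# levels are assumed here and may not match the values the API accepts.
EFFORT_LEVELS = ["minimal", "low", "medium", "high", "xhigh"]


@dataclass
class Turn:
    tool_calls_expected: int  # how many tools the turn is likely to need
    multi_step: bool          # e.g. troubleshooting that chains several checks
    latency_sensitive: bool   # e.g. small talk, confirmations, barge-in replies


def choose_effort(turn: Turn) -> str:
    """Hypothetical heuristic: spend reasoning effort only where the turn needs depth."""
    score = min(turn.tool_calls_expected, 2) + (2 if turn.multi_step else 0)
    if turn.latency_sensitive:
        score -= 1  # favor a faster reply when the user is waiting on a quick answer
    score = max(0, min(score, len(EFFORT_LEVELS) - 1))  # clamp to a valid index
    return EFFORT_LEVELS[score]


print(choose_effort(Turn(tool_calls_expected=0, multi_step=False, latency_sensitive=True)))
print(choose_effort(Turn(tool_calls_expected=2, multi_step=True, latency_sensitive=False)))
```

The design choice is to make effort a per-turn decision rather than a session-wide setting, so a quick confirmation stays fast while a multi-step troubleshooting turn gets the deeper reasoning budget.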