From Free-Form Prompts to Spec-Driven Coding

AI code generation began with “vibe coding”: developers tossed large, open-ended prompts at models and iterated on whatever came back. That style is fast but fragile: small misunderstandings snowball into rework, and teams struggle to review what an AI actually intended to build. GitHub and OpenAI are now converging on a different pattern, spec-driven coding via structured workflows. Instead of improvising from a single prompt, new tools front-load specification, planning, and task breakdown before any code is written. This shift mirrors how mature engineering teams already work, but it makes those steps explicit and machine-readable so agents can participate safely. The result is less magic, more process: models follow plans, developers review artifacts, and code review checkpoints are built into the flow. As AI coding scales across organizations, the move from ad-hoc prompting to plan-based automation is becoming less an experiment and more a default operating model.

GitHub’s Spec-Kit Turns Ideas into Specs, Plans, and Tasks

GitHub’s Spec-Kit brings a full spec-driven workflow to AI-assisted development. The open-source toolkit centers on a Specify CLI plus templates and helper scripts that transform feature requests into structured specs, technical plans, and task lists before implementation. Its staged pipeline (Specify, Plan, Tasks, Implement) pushes teams to define product scenarios, outline architecture, and slice work into assignable units before any AI code generation begins. Six primary slash commands cover constitution, specification writing, planning, task breakdown, issue conversion, and implementation, with optional clarify, analyze, and checklist commands to catch gaps and inconsistencies early. Critically, every stage creates tangible artifacts that can be inspected at normal code review checkpoints, giving managers and peers a clear view of intent and trade-offs. With over 90,000 GitHub stars and more than 8,000 forks, Spec-Kit signals strong interest in repeatable, structured workflows that integrate AI agents without bypassing standard engineering controls.
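The staged gating described above can be illustrated with a minimal Python sketch. It is not Spec-Kit's implementation: the `FeatureWorkflow` class, its methods, and the sample artifacts are hypothetical names chosen for illustration. Only the stage order (Specify, Plan, Tasks, Implement) comes from the text.

```python
from dataclasses import dataclass, field

# Stage order as described in the text. Everything else here is an
# illustrative assumption, not Spec-Kit's actual code or file layout.
STAGES = ["specify", "plan", "tasks", "implement"]


@dataclass
class FeatureWorkflow:
    """Tracks which stage artifacts have been reviewed for one feature."""
    name: str
    approved: dict = field(default_factory=dict)  # stage -> artifact text

    def next_stage(self):
        """Return the first stage with no approved artifact, or None when done."""
        for stage in STAGES:
            if stage not in self.approved:
                return stage
        return None

    def approve(self, stage, artifact):
        """Record a reviewed artifact, refusing to skip ahead in the pipeline."""
        expected = self.next_stage()
        if stage != expected:
            raise ValueError(f"cannot approve {stage!r}; next stage is {expected!r}")
        if not artifact.strip():
            raise ValueError("artifact must not be empty")
        self.approved[stage] = artifact


wf = FeatureWorkflow("photo-albums")
wf.approve("specify", "Users can group photos into albums.")
wf.approve("plan", "Store albums in a local SQLite table.")
print(wf.next_stage())  # tasks
```

The point of the sketch is the ordering constraint: an attempt to approve `implement` before `tasks` raises an error, which mirrors the idea that implementation starts only after the earlier artifacts exist and have been reviewed.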

OpenAI’s Symphony Orchestrator Automates Tickets Until Merge

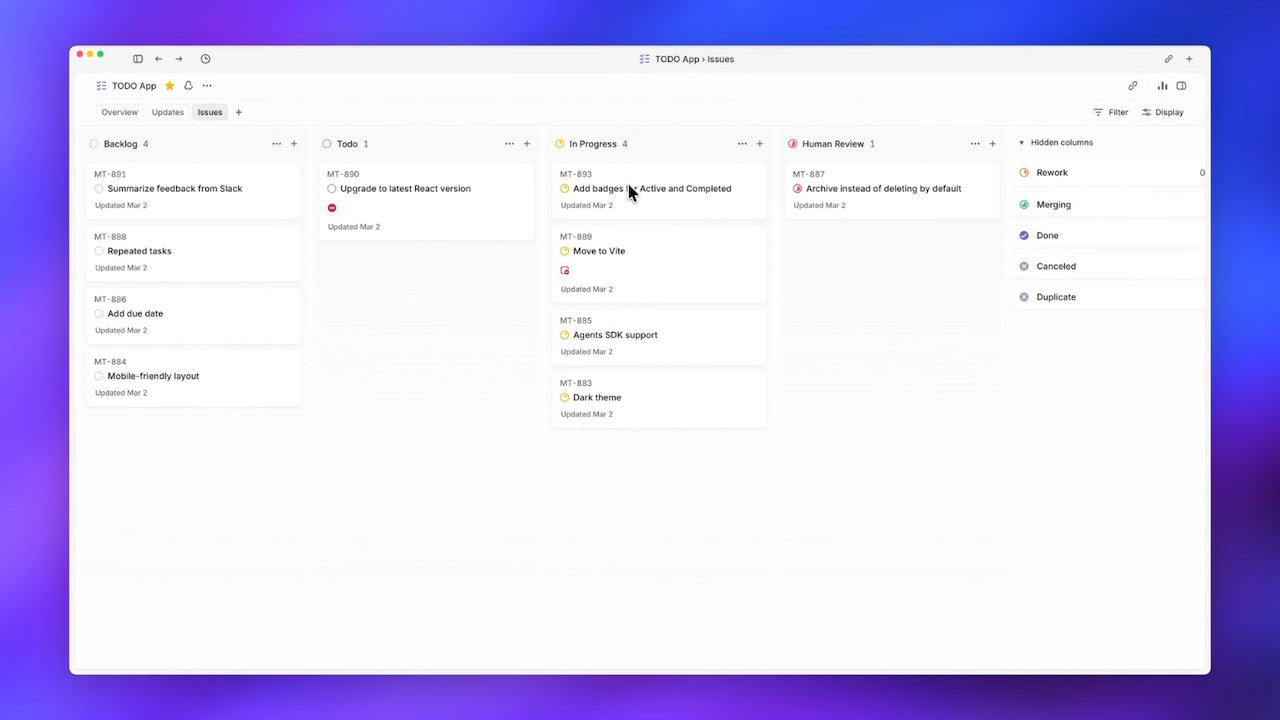

OpenAI’s Symphony tackles a different bottleneck: human supervision of parallel AI agents. Internal Codex teams found it impractical to monitor more than three to five agents at once, as context switching erased productivity gains. Symphony responds by turning the ticket system, specifically Linear, into the dispatcher and supervisor. Under the spec, each open ticket spawns its own Codex agent and dedicated workspace, running autonomously until the work is merged. Symphony treats Linear as a state machine, moving tickets through Todo, In Progress, Review, and Merging, while automatically respawning agents that crash or stall. Agents build a task dependency graph and execute across that DAG, so independent tasks run in parallel, and work can span multiple repositories or pure research tickets. OpenAI reports a sixfold increase in merged pull requests in the first three weeks of internal use, illustrating how orchestrated, spec-driven coding can unlock throughput once human attention is no longer the central gate.
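Two ideas from the paragraph above, tickets as a state machine and execution across a dependency DAG, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Symphony's code: the four state names come from the text, while the allowed transitions, the function names, and the sample tasks are hypothetical. The sketch uses the standard-library `graphlib.TopologicalSorter` (Python 3.9+).

```python
from graphlib import TopologicalSorter

# State names come from the text; the allowed transitions are an assumption.
TRANSITIONS = {
    "Todo": {"In Progress"},
    "In Progress": {"Review"},
    "Review": {"In Progress", "Merging"},  # assume review can send work back
    "Merging": set(),                      # treated as terminal in this sketch
}


def advance(state, new_state):
    """Move a ticket to new_state, rejecting transitions the table forbids."""
    if new_state not in TRANSITIONS[state]:
        raise ValueError(f"illegal transition {state!r} -> {new_state!r}")
    return new_state


def parallel_batches(dependencies):
    """Group tasks into batches; every task in a batch has all its
    dependencies finished, so the batch could run in parallel."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


# Hypothetical tasks: each key depends on the tasks in its set.
deps = {
    "api": {"schema"},
    "ui": {"schema"},
    "e2e-tests": {"api", "ui"},
}
print(parallel_batches(deps))
# [['schema'], ['api', 'ui'], ['e2e-tests']]
```

In this toy graph, `api` and `ui` land in the same batch because both depend only on `schema`, which is the kind of parallelism a dependency DAG exposes without a human deciding the order.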

Why Structured Workflows Improve Quality and Oversight

Both Spec-Kit and Symphony reflect a common realization: AI code generation needs structure to be safe and scalable. In GitHub’s model, specifications, plans, and task files create reviewable checkpoints before agents touch the codebase. Clarification and checklist commands function as guardrails, allowing architects and leads to stop or adjust work long before a pull request appears. This turns spec-driven coding into a collaborative loop rather than a black-box prompt. Symphony extends the idea into operations, encoding workflow states and task dependencies so orchestration is predictable, auditable, and recoverable when agents fail. Together, these approaches reduce the risk of rogue changes, align agents with product intent, and keep humans in the loop at key decision points instead of micromanaging every step. For teams wary of handing control to AI, structured workflows offer a compromise: automation handles repetition and coordination, while people own specifications, priorities, and code review checkpoints.