Musk’s Grok AI Lip Sync Moment: When “Nothing in This Video Is Real” Still Feels Real

When Elon Musk posted a short Grok-generated clip on X with the caption “Nothing in this video is real,” it drew millions of views within hours. The viral post showcased Grok Imagine’s improved lip sync, running on the Grok 4.3 Beta model, which significantly upgrades synchronized mouth movements and temporal consistency in AI-generated videos. That realism matters more than the flashy tech specs. For many casual viewers, the usual giveaway that a clip is fake (awkward lips or mismatched audio) is now largely gone. Grok Imagine is already generating massive volumes of content, driven by a powerful training setup and a rapid update cycle. As the cost and friction of creating photorealistic talking-head clips drop, a single convincing video shared by a high-profile account becomes a live stress test of how societies handle AI video misinformation, long before regulations or norms fully catch up.

From Cherry Blossoms to Clicks: Inside a Profit-Driven Fake Video Machine

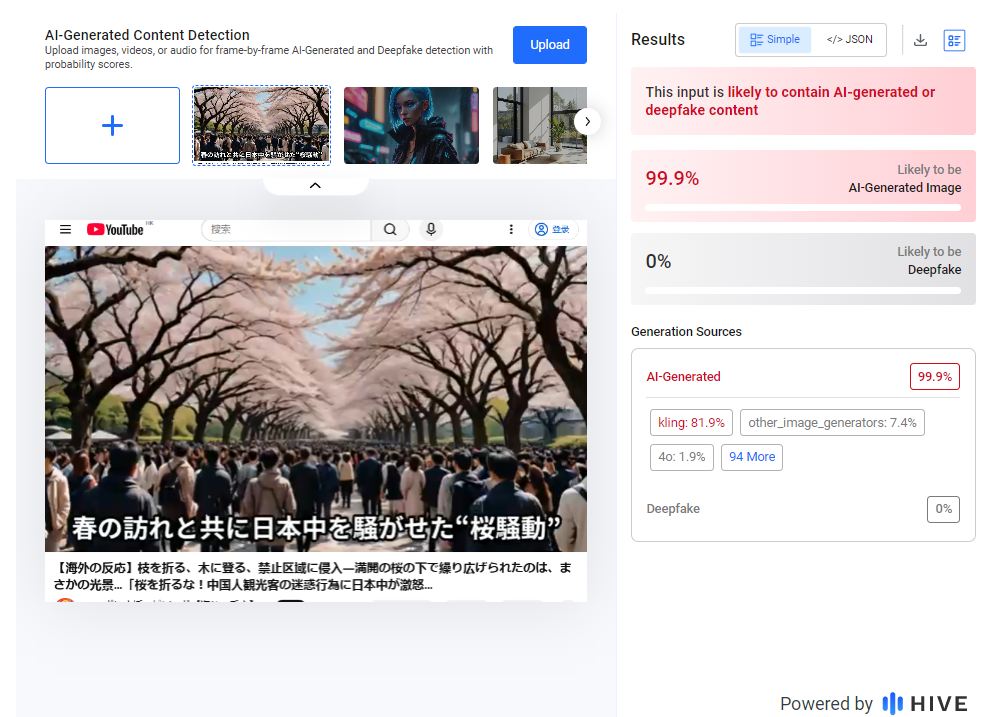

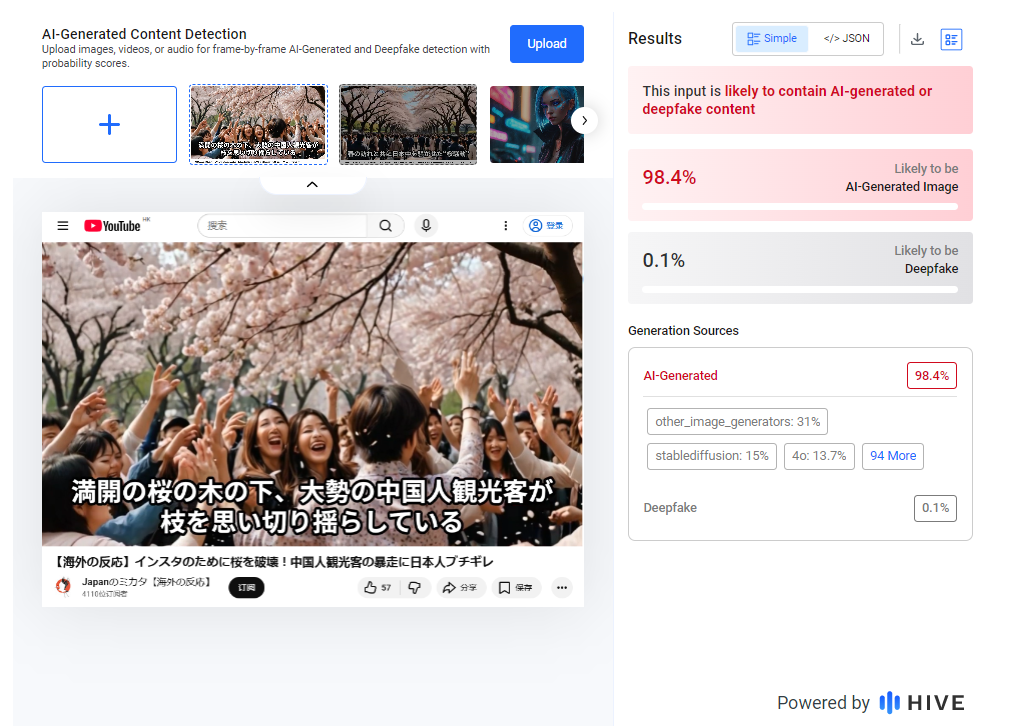

While Musk’s demo was clearly labelled, many other AI-generated videos are not. An investigation into the Japanese crowdsourcing platform CrowdWorks uncovered large numbers of paid orders to mass-produce anti-China fake videos. These clips, posted by YouTube channels focused on “overseas reactions,” accused Chinese tourists of damaging cherry blossoms during peak viewing season. Visual analysis and AI detection tools found telltale signs of synthetic imagery: deformed fingers and faces, overly smooth skin, “melted” limbs in crowded scenes, repetitive cherry blossom textures and unnaturally dense crowds. Hive Moderation flagged some frames as more than 98% likely to be AI-generated. Crucially, creators admitted the stories were fabricated from scratch, pointing to a commercialised rumour industry built for clicks and ad revenue. Fake political videos and tourism scare stories like these can inflame public anger, fuel xenophobia and nudge audiences toward boycotts, even when the underlying events never happened.

Why This Matters for Malaysia: From Tourism Backlash to Regional Politics

For Malaysian viewers, these examples are not distant curiosities. If AI tools can cheaply fabricate convincing scenes of foreign tourists misbehaving, the same approach can target regional neighbours, local communities or even Malaysian public figures. AI video misinformation could be weaponised to manufacture “evidence” of bad behaviour at local festivals, environmental damage by a particular group, or supposedly offensive remarks by politicians and influencers. In a multicultural, tourism-dependent country, such clips can quickly escalate into calls for boycotts, diplomatic friction or social tensions. The cherry blossom case shows how easily mixed-source edits and synthetic frames can be stitched into persuasive narratives that feel authentic. As Grok’s lip sync and similar tools remove obvious visual glitches, Malaysians are increasingly likely to encounter slick fake political videos or viral rumours that spread faster than corrections, especially in WhatsApp groups and local-language social feeds.

Who Owns “Truth” in an AI World? From Fact-Checkers to the Vatican’s Moral Push

Newsrooms, civil-society groups and platforms are racing to respond with fact-checking initiatives, AI content labels and new policies on synthetic media. Some propose watermarking and content-authenticity standards so that deepfake detection can work at scale. Beyond technical fixes, there is a wider debate over who gets to arbitrate truth. The Vatican has emerged as an unusual voice in this conversation, warning of a “crisis of truth” driven by AI-generated content. It has introduced formal AI guidelines insisting that technologies must be ethical, transparent and human-centred, and must never overtake or replace humans. The rules explicitly ban manipulative or discriminatory uses of AI. Speculation about a Vatican “truth engine” reflects a broader search for trusted referees in an age of AI video misinformation. Yet even the Vatican is moving cautiously, stressing that tools alone cannot substitute for human judgment, ethics and media literacy.

A Practical Checklist for Malaysians – and Why Honest Creators Must Step Up

Malaysian users can protect themselves with a simple checklist. First, verify the source: who posted the video, and is it a known news outlet, an official channel or an anonymous account? Second, search for corroboration on reputable news sites or in official statements. Third, run a reverse image or video search to see whether the same frames appear elsewhere with different captions. Fourth, be sceptical of metadata screenshots, because titles and timestamps can be edited. Finally, look for contextual clues: unnatural crowds, odd body parts, repetitive backgrounds, or narration that never clearly shows the claimed event. On the creator side, ethical disclosure matters. Brands, media outlets and influencers mixing AI and real footage should clearly label synthetic elements and, where possible, use visible or invisible watermarks. Following emerging disclosure norms voluntarily, as Musk did with his explicit “Nothing in this video is real” caption, helps build trust before stricter regulations arrive.