When Clean Code Hides Bugs No One Understands

In many teams, pull requests from junior developers look better than ever: tests are green, style is consistent, and features ship fast. Yet reviewers increasingly find subtle defects, such as timing bugs that appear only under rare conditions, buried inside otherwise polished changes. The twist is that the junior developer often cannot explain why the code is wrong, or even how it works, because much of it was generated by AI coding tools. These tools optimize for rapid output, not for developer comprehension or long-term learning. For seniors, who bring years of architectural context, that imbalance is manageable: they can filter suggestions critically. For new developers, it is the whole problem, because code appears before understanding has formed. As a result, output and expertise are becoming decoupled, and debugging skills are not keeping pace with the apparent productivity gains.

The Rise of the AI-Empowered ‘Expert Beginner’

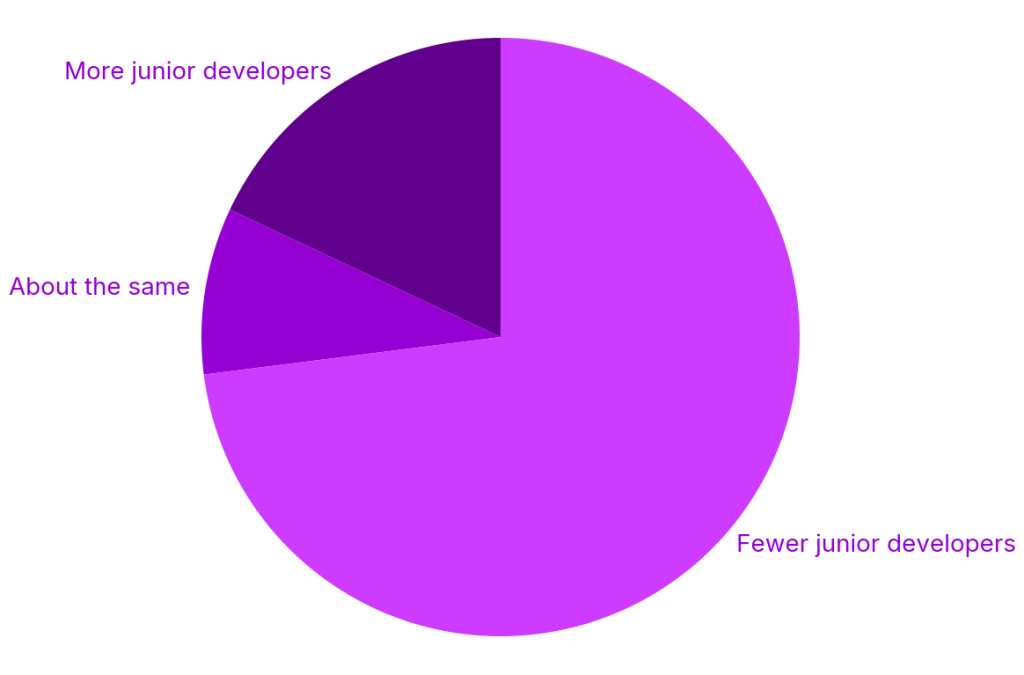

Engineering managers describe a new kind of “expert beginner”: conscientious juniors who move quickly with AI assistance and produce clean code that passes review, but who cannot articulate the reasoning behind their implementations. Industry research suggests why this pattern is spreading. Across surveyed organizations, juniors complete tasks up to 55% faster with AI assistance, while 73% of organizations have reduced junior hiring over the past two years. With fewer traditional early-career roles and less structured mentorship, new developers are pushed toward tool-centric workflows that emphasize speed. Their open-mindedness makes them eager adopters of AI coding tools, but their limited experience leaves them poorly equipped to evaluate or debug AI-generated solutions. The result is a widening oversight gap during code review: seniors must not only check correctness but also reverse-engineer unfamiliar patterns that the juniors themselves cannot explain.

Code Review Challenges and the Debugging Skill Gap

These dynamics are reshaping code review. What used to be a dialogue about trade-offs and design is increasingly a forensic exercise in validating opaque AI output. Reviewers encounter pull requests in which every individual change looks reasonable, yet bugs emerge only under specific race conditions or edge cases. When questioned, AI-reliant juniors often struggle to step through the logic or design targeted tests, revealing gaps in core debugging skills. This raises practical concerns. Who is responsible when something that no one fully understands goes wrong? How can teams cultivate engineers who can own complex systems if their early years are spent supervising AI rather than learning to reason through problems themselves? The “seniors with AI” model may boost short-term throughput, but it risks hollowing out the experiential ladder that sustained engineering quality in the past.
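
To make the pattern concrete, here is a minimal Python sketch of the kind of defect described above: a check-then-act race in which each line looks reasonable on its own. It is an illustration only, not code from any team mentioned here. The `Account` class, its method names, and the `pause` hook are hypothetical, and the `threading.Barrier` exists purely to force the unlucky interleaving that would otherwise show up only rarely.

```python
import threading


class Account:
    """Hypothetical example class; not taken from any real codebase."""

    def __init__(self, balance):
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw_unsafe(self, amount, pause=lambda: None):
        # Check-then-act: the read and the write are separate steps,
        # so another thread can run in the gap between them.
        current = self.balance
        if current >= amount:
            pause()  # stands in for an unlucky context switch
            self.balance = current - amount
            return True
        return False

    def withdraw_safe(self, amount):
        # Holding the lock makes the check and the update one atomic step.
        with self._lock:
            if self.balance >= amount:
                self.balance -= amount
                return True
            return False


def run_two(target, *args):
    """Run the same call on two threads and wait for both to finish."""
    threads = [threading.Thread(target=target, args=args) for _ in range(2)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()


def demo():
    # The barrier makes both threads read the balance before either writes.
    barrier = threading.Barrier(2)
    racy = Account(100)
    run_two(racy.withdraw_unsafe, 30, barrier.wait)

    safe = Account(100)
    run_two(safe.withdraw_safe, 30)
    return racy.balance, safe.balance


if __name__ == "__main__":
    racy_balance, safe_balance = demo()
    print(f"unsafe: {racy_balance}, safe: {safe_balance}")
```

With the interleaving forced, two withdrawals of 30 from a balance of 100 leave 70 in the unsafe version, because one update is lost, and 40 in the locked version. A single-threaded unit test of `withdraw_unsafe` would pass, which is why this class of bug can survive a green test suite and a tidy diff.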

AI Agent Limitations Emerge in Long and Complex Tasks

New findings from Microsoft researchers highlight how AI agent limitations compound these issues. In the DELEGATE-52 benchmark, which simulates multistep workflows across 52 professional domains, even top frontier models degraded document quality over time when performing delegated knowledge work. On average, they lost around a quarter of the content across 20 interactions, with catastrophic corruption occurring in the majority of model–domain combinations. The models performed relatively better on programming tasks than on natural language scenarios, but the pattern was consistent: long-running tasks magnified small errors into large failures. For teams hoping to hand off complex debugging or refactoring to AI agents, this is a red flag. When workflows stretch across many steps, the tools that generate code quickly can also introduce hard-to-detect corruption or subtle logic regressions, exactly the kinds of problems that require strong human debugging skills to catch and correct.
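
A back-of-the-envelope calculation shows how small per-step losses can add up to the figure quoted above. The sketch below assumes that the loss compounds at the same rate on every interaction. That uniform-rate model is an assumption made here for illustration and is not something the benchmark reports; the function name is likewise hypothetical.

```python
def per_step_retention(total_loss: float, steps: int) -> float:
    """Fraction of content kept per step, ASSUMING the loss compounds
    uniformly across all steps (an illustrative model only)."""
    return (1.0 - total_loss) ** (1.0 / steps)


if __name__ == "__main__":
    # About a quarter of the content lost over 20 interactions.
    kept = per_step_retention(total_loss=0.25, steps=20)
    print(f"kept per step: {kept:.4f}, lost per step: {1 - kept:.2%}")
```

Under that assumption, each interaction would lose only about 1.4% of the content, a change small enough to pass unnoticed in any single step even though the cumulative effect is large.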

A Fragile Talent Pipeline in an AI-First Future

Behind the debugging crisis lies a weakening talent pipeline. Entry-level tech job postings have fallen sharply, internships have declined, and more than half of so-called entry roles now demand prior experience. At the same time, organizations are investing heavily in AI coding tools and AI-assisted workflows, often at the expense of structured mentoring. The implicit bet is that “fewer juniors plus more AI” can replace traditional apprenticeship models. Yet if AI coding tools separate output from understanding, sustainable developer growth becomes a strategic risk. Teams will need deliberate practices (such as pairing, guided code review, and requiring humans to explain AI-generated changes) to rebuild the learning loop. Otherwise, the industry may end up with faster short-term delivery but a shrinking pool of engineers who can debug, design, and safely supervise the very AI systems on which modern software development now depends.