From Freeform Prompts to Spec-Driven AI Coding Agents

AI coding agents are moving away from improvising entire features from a single prompt and toward structured, spec-driven workflows. GitHub’s Spec-Kit, OpenAI’s Symphony, and Anthropic’s Petri 3.0 each embody this shift. Instead of treating code generation tools as autonomous coders, these platforms ask teams to formalize intent, constrain behavior, and build human oversight into the loop. Specs, plans, tickets, and test harnesses now play a central role in how agents operate. The trend reframes AI assistance as a disciplined extension of existing engineering practices rather than a replacement for them. For developers, the message is clear: high-quality automation depends less on model size and more on the surrounding process, meaning how work is decomposed, how it is reviewed, and how alignment testing is embedded in CI/CD and project management systems.

GitHub Spec-Kit: Plan–Task–Review Before Code Generation

GitHub’s Spec-Kit brings a formal spec-driven workflow to AI coding agents by forcing structure before any code is generated. The toolkit uses a Specify CLI plus templates and helper scripts to move from feature ideas to written specs, project plans, task lists, and only then implementation. Six slash commands cover constitution drafting, specification writing, planning, task breakdown, issue conversion, and implementation, while optional clarify, analyze, and checklist commands nudge teams to close information gaps and reinforce review steps. This approach turns AI coding into a multi-stage pipeline with explicit checkpoints, favoring slower but more predictable progress over freeform prompting. Early adoption signals are strong, with tens of thousands of stars and thousands of forks, but GitHub notes practical constraints: teams must still manage installation paths, Python dependencies, and extension drift before scaling Spec-Kit across their engineering organizations.
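The staged pipeline described above can be sketched as a simple gate that refuses to unlock code generation until every earlier artifact has been reviewed. This is a minimal illustration, not Spec-Kit's implementation: the stage names, the `FeaturePipeline` class, and its methods are all hypothetical and only mirror the six-step sequence listed in the paragraph.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical stage names mirroring the six steps described above;
# they are NOT Spec-Kit's actual command identifiers.
STAGES = ["constitution", "specify", "plan", "tasks", "issues", "implement"]


@dataclass
class FeaturePipeline:
    """Tracks which stages of a feature have a human-approved artifact."""

    approved: list[str] = field(default_factory=list)

    def next_stage(self) -> str | None:
        """Return the first stage without an approved artifact, if any."""
        for stage in STAGES:
            if stage not in self.approved:
                return stage
        return None

    def approve(self, stage: str) -> None:
        """Record a reviewed artifact, refusing to skip ahead."""
        expected = self.next_stage()
        if stage != expected:
            raise ValueError(f"cannot approve {stage!r}; next stage is {expected!r}")
        self.approved.append(stage)

    def can_generate_code(self) -> bool:
        """Code generation unlocks only once all earlier stages are approved."""
        return self.next_stage() == "implement"
```

In this sketch each call to `approve` stands in for a review checkpoint, so an agent that tries to jump straight to implementation gets an error rather than a head start.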

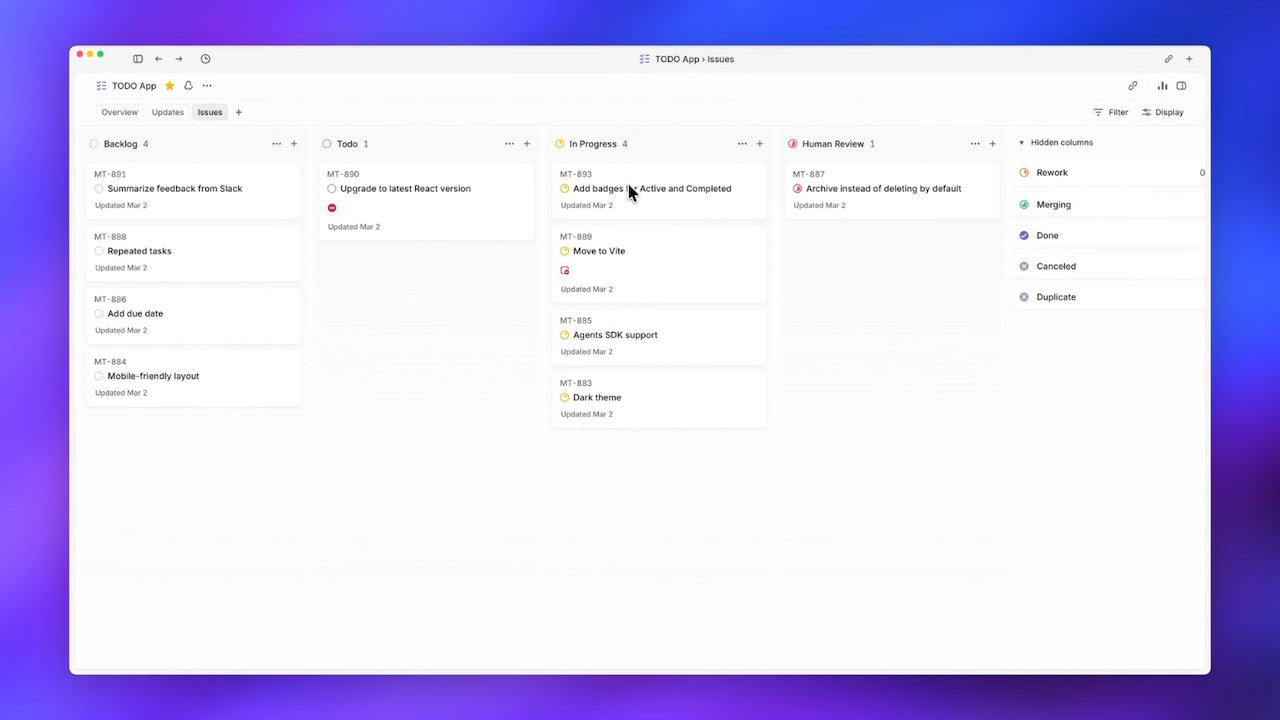

OpenAI Symphony: Ticket-Driven Orchestration for Codex Agents

OpenAI’s Symphony tackles a different bottleneck: human attention as teams scale AI coding agents. Symphony is an open-source specification and reference implementation that lets Codex-based agents pull tickets directly from Linear and run until their work is merged. It treats Linear as a state machine, assigns each ticket its own agent, and respawns agents that crash mid-task so progress continues without constant supervision. By removing engineers from the dispatch loop and binding agents tightly to project management, OpenAI reports a sixfold increase in merged pull requests on internal teams over the first three weeks. Symphony ships as an Elixir reference implementation, not as a fully supported product, but it has already inspired forks that port the orchestration pattern to other models and issue trackers. The result is a repeatable, ticket-driven workflow that hooks AI coding into existing backlog and PR pipelines.
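The orchestration pattern described above (tickets as a state machine, one agent per ticket, respawn on crash) can be sketched in a few lines. This is an illustration of the pattern only, not Symphony's code, which is an Elixir reference implementation. The state names, the `Ticket` class, the `agent` callable, and the `max_attempts` retry cap are all assumptions made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable

# Hypothetical ticket states; a real tracker defines its own workflow.
TODO, IN_PROGRESS, MERGED, FAILED = "todo", "in_progress", "merged", "failed"


@dataclass
class Ticket:
    key: str
    state: str = TODO
    attempts: int = 0


def run_ticket(
    ticket: Ticket, agent: Callable[[Ticket], None], max_attempts: int = 3
) -> Ticket:
    """Run one agent per ticket, respawning it if it crashes mid-task."""
    ticket.state = IN_PROGRESS
    while ticket.attempts < max_attempts:
        ticket.attempts += 1
        try:
            agent(ticket)  # stand-in for an agent working until its PR merges
        except Exception:
            continue  # crash: start a fresh attempt on the same ticket
        ticket.state = MERGED
        return ticket
    ticket.state = FAILED  # retry cap reached (an assumption): escalate to a human
    return ticket


def dispatch(tickets: list[Ticket], agent: Callable[[Ticket], None]) -> list[Ticket]:
    """Pick up every ticket still in TODO; nobody assigns work by hand."""
    return [run_ticket(t, agent) for t in tickets if t.state == TODO]
```

The design point is that the tracker's state, not an engineer, decides what gets picked up next, and a crashed agent only costs another attempt rather than a stalled ticket.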

Anthropic Petri 3.0: Modular Alignment Testing for Production Systems

Anthropic’s Petri 3.0 focuses less on writing code and more on AI alignment testing for systems already in deployment. The update introduces a structural split between auditor and target models, allowing teams to tune the judge and the system under evaluation independently. This modular auditor–target architecture reduces the risk that a single fixed setup will overfit to one testing style or conceal important behavioral differences between models and environments. Petri 3.0 also adds new behavior-checking mechanisms, including Dish and Bloom-based evaluations, making the toolkit more production-aware and better suited to targeted, spec-driven behavior checks. Anthropic handed Petri to Meridian Labs at the same time, positioning it within a broader open evaluation stack that includes tools like Inspect and Scout. For developers, Petri becomes a practical way to plug structured alignment tests into CI/CD and audit workflows around AI-driven features.
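The auditor and target split can be illustrated with two independent callables, so that either side can be swapped or tuned without touching the other. This is a rough sketch of the idea, not Petri's API: the `Target` and `Auditor` type aliases, `run_audit`, the score range, and the `threshold` parameter are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable

# Both roles are plain callables so each can be replaced independently.
Target = Callable[[str], str]          # system under evaluation: prompt -> response
Auditor = Callable[[str, str], float]  # judge: (prompt, response) -> score in [0, 1]


@dataclass
class AuditResult:
    prompt: str
    response: str
    score: float
    passed: bool


def run_audit(
    target: Target, auditor: Auditor, prompts: list[str], threshold: float = 0.5
) -> list[AuditResult]:
    """Send each prompt to the target, then let the auditor score the response."""
    results = []
    for prompt in prompts:
        response = target(prompt)
        score = auditor(prompt, response)
        results.append(AuditResult(prompt, response, score, score >= threshold))
    return results
```

A CI job built on a sketch like this could fail the build whenever any result has `passed` set to `False`, and the same prompts could be rerun against a different target or a stricter auditor without changing the harness.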

A Converging Pattern: Structured Workflows and Human Oversight

Taken together, Spec-Kit, Symphony, and Petri 3.0 outline a shared pattern for modern AI coding agents: structured workflows, explicit specs, and continuous human oversight. GitHub channels feature requests into layered plans and tasks before agents write code. OpenAI binds agents to ticket lifecycles and pull-request states, emphasizing orchestration over autonomy. Anthropic, through Petri, pushes organizations to treat AI behavior as an object of continuous, production-grade evaluation. None of these platforms advocates fully autonomous code generation; instead, they embed AI into the project management, version control, and testing pipelines that developers already trust. Teams can now integrate spec-driven workflow steps into Linear, GitHub issues, or other systems while enforcing alignment checks in CI/CD. The emerging lesson is that impactful AI coding depends less on letting agents run wild and more on constraining them with well-specified goals, measurable tests, and reviewable artifacts.